Model Context Protocol (MCP) and Agent-to-Agent (A2A) have gained significant industry attention in the past year. MCP first caught the world's attention in dramatic fashion when Anthropic published it in November 2024, garnering tens of thousands of stars on GitHub within its first month. Organizations quickly saw the value of MCP as a way to abstract APIs into natural language, allowing LLMs to easily interpret and use them as tools. In April 2025, Google introduced A2A, a new protocol that lets agents discover each other's capabilities and supports the rapid growth and scaling of agent systems.

Both protocols are now Linux Foundation projects and both are designed for agent systems, but their adoption curves have differed significantly: MCP saw rapid adoption, while A2A's progress was slower. This has led to industry commentary suggesting that A2A is quietly fading into the background, with many concluding that MCP has become the de facto standard for agent systems.

How do these two protocols compare? Is there really an epic battle going on between MCP and A2A? Will it be Blu-ray vs. HD DVD, or VHS vs. Betamax? Well, not exactly. While there is some overlap, the two operate at different levels of the agent stack, and both are highly relevant.

MCP is designed to help LLMs understand which external tools are available to them. Before MCP, these tools were exposed primarily through APIs. However, LLMs handle raw APIs clumsily, and that approach is difficult to scale. LLMs are designed to work in the world of natural language, where they interpret a task and identify the right tool to accomplish it. APIs also suffer from standardization and versioning issues. For example, if an API undergoes a version update, how would the LLM know about it and use it correctly, especially across thousands of APIs? This quickly becomes unmanageable. These are exactly the problems MCP was designed to solve.
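To make the abstraction concrete, here is a minimal sketch of what an MCP server hands the LLM instead of a raw API. The tool name, its parameters, and the REST endpoint it is imagined to wrap are all hypothetical; the descriptor shape (`name`, `description`, `inputSchema` as JSON Schema) and the JSON-RPC 2.0 envelope for a `tools/list` response follow the MCP specification as we understand it, so check the current spec before relying on the details.

```python
import json

# Hypothetical REST endpoint an MCP server might wrap:
#   GET /api/v2/clients/{mac}/roaming-events?hours=...
# The descriptor hides the URL, HTTP verb, and API version behind a name,
# a natural-language description, and a JSON Schema for the inputs.
ROAMING_TOOL = {
    "name": "get_client_roaming_events",
    "description": (
        "Return recent Wi-Fi roaming events for a client device, "
        "identified by its MAC address."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "mac": {"type": "string", "description": "Client MAC address"},
            "hours": {"type": "integer", "description": "Look-back window in hours"},
        },
        "required": ["mac"],
    },
}


def tools_list_response(request_id, tools):
    """Build a JSON-RPC 2.0 response to an MCP `tools/list` request."""
    return {"jsonrpc": "2.0", "id": request_id, "result": {"tools": tools}}


if __name__ == "__main__":
    print(json.dumps(tools_list_response(1, [ROAMING_TOOL]), indent=2))
```

If the underlying API moves from `v2` to `v3`, only the server's implementation changes; the LLM keeps seeing the same tool name and schema.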

Architecturally, MCP works well, up to a point. As the number of tools on an MCP server grows, the tool descriptions and manifests sent to the LLM can become massive and quickly consume the entire context window. This applies even to the largest LLMs, including those that support hundreds of thousands of tokens. At scale, this becomes a fundamental limitation. Impressive progress has recently been made in reducing the number of tokens MCP servers consume, but even so, MCP's scalability limits are likely to remain.
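A back-of-the-envelope sketch shows why this limit is structural: the manifest grows linearly with the number of tools. The descriptor below is a hypothetical "typical" tool, and the four-characters-per-token ratio is only a rough heuristic (real tokenizers differ), so treat the numbers as orders of magnitude rather than measurements.

```python
import json

CHARS_PER_TOKEN = 4  # rough heuristic for English/JSON text; real tokenizers differ


def estimate_tokens(obj):
    """Very rough token estimate for any JSON-serializable object."""
    return len(json.dumps(obj)) // CHARS_PER_TOKEN


def make_tool(i):
    """Hypothetical tool descriptor of moderate size."""
    return {
        "name": f"tool_{i}",
        "description": "Query a network subsystem and return structured results. " * 3,
        "inputSchema": {
            "type": "object",
            "properties": {
                "target": {"type": "string", "description": "Device or site identifier"},
                "window_minutes": {"type": "integer", "description": "Time window"},
            },
            "required": ["target"],
        },
    }


def manifest_tokens(n_tools):
    """Estimated tokens for a manifest listing n_tools descriptors."""
    return estimate_tokens([make_tool(i) for i in range(n_tools)])


if __name__ == "__main__":
    for n in (10, 100, 1000, 5000):
        print(f"{n:>5} tools ~ {manifest_tokens(n):>9,} tokens")
```

Trimming descriptions lowers the slope of that line, which is what recent token-reduction work does, but the cost still grows with every tool added.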

This is where A2A comes in. A2A does not operate at the level of tools or tool descriptions, and it does not get involved in the details of API abstraction. Instead, A2A introduces the concept of agent cards: high-level descriptors that capture an agent's overall capabilities rather than explicitly listing the individual tools the agent has access to. Additionally, A2A operates only between agents; unlike MCP, it does not interact directly with tools or end systems.
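For contrast with the MCP tool descriptor, here is an illustrative agent card for a hypothetical RF Analysis Agent. The field names are modeled on the A2A Agent Card structure (`name`, `description`, `url`, `version`, `capabilities`, input/output modes, and coarse-grained `skills`); the endpoint URL and all values are invented, and an A2A server would typically publish such a card at a well-known path on its host (check the current A2A specification for the exact path and required fields).

```python
import json

# Illustrative agent card; field names modeled on A2A, values are made up.
RF_AGENT_CARD = {
    "name": "RF Analysis Agent",
    "description": "Diagnoses radio-frequency issues in enterprise Wi-Fi networks.",
    "url": "https://agents.example.com/rf",  # hypothetical endpoint
    "version": "1.0.0",
    "capabilities": {"streaming": True},
    "defaultInputModes": ["text/plain"],
    "defaultOutputModes": ["text/plain"],
    "skills": [
        {
            "id": "roaming-diagnosis",
            "name": "Roaming diagnosis",
            "description": "Explain why clients roam poorly between access points.",
            "tags": ["wifi", "wi-fi", "roaming", "rf"],
            "examples": ["Why do clients on floor 3 keep dropping?"],
        }
    ],
}


def advertised_summary(card):
    """What a supervisor reasons over: name, description, and coarse skills, not tools."""
    return {
        "name": card["name"],
        "description": card["description"],
        "skills": [skill["name"] for skill in card["skills"]],
    }


if __name__ == "__main__":
    print(json.dumps(advertised_summary(RF_AGENT_CARD), indent=2))
```

Note what is absent: no tool names, no input schemas. Whatever tools the agent uses internally stay behind the card.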

So, which one should you use? Which one is better? Ultimately, the answer is both.

If you're building a simple agent system with a single supervisor agent and a number of tools it can access, MCP alone may be the ideal choice, as long as the prompt remains compact enough to fit in the LLM's context window (which covers the entire prompt budget: tool schemas, system instructions, conversation state, loaded documents, and more). However, if you are deploying a multi-agent system, you will very likely need to add A2A to the mix.
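The "does it fit?" test can be written down directly. This is a sketch with hypothetical token counts and a hypothetical 200,000-token window; the `reserved_for_output` field is our own addition, standing in for the "and more" in the budget above, since the model's reply usually shares the same window.

```python
from dataclasses import dataclass


@dataclass
class PromptBudget:
    """Token counts for everything that shares one context window (illustrative)."""
    system_instructions: int
    tool_schemas: int
    conversation_state: int
    loaded_documents: int
    reserved_for_output: int  # assumption: space kept free for the model's reply

    def total(self) -> int:
        return (self.system_instructions + self.tool_schemas
                + self.conversation_state + self.loaded_documents
                + self.reserved_for_output)


def fits(budget: PromptBudget, context_window: int) -> bool:
    """True if the whole prompt budget fits in the given context window."""
    return budget.total() <= context_window


if __name__ == "__main__":
    small = PromptBudget(2_000, 40_000, 20_000, 60_000, 8_000)
    large = PromptBudget(2_000, 150_000, 20_000, 60_000, 8_000)
    print(small.total(), fits(small, 200_000))  # 130000 True
    print(large.total(), fits(large, 200_000))  # 240000 False
```

Only `tool_schemas` changes between the two cases, which is exactly the term that keeps growing as an MCP-only system adds tools.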

Imagine a supervisor agent responsible for handling a request such as "analyze Wi-Fi roaming issues and recommend mitigation strategies." Instead of directly discovering every possible tool, the supervisor uses A2A to discover specialized agents, such as an RF Analysis Agent, a User Authentication Agent, and a Network Performance Agent, based on their high-level agent cards. Once an appropriate agent is selected, that agent uses MCP to find and invoke the specific tools it needs. In this flow, A2A provides scalable routing at the agent level, while MCP provides precise execution at the tool level.
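The two-level flow can be sketched in a few lines. Everything here is hypothetical: the agents, their card tags, and their tool names are invented, and simple keyword overlap stands in for whatever matching a real supervisor would do (often an LLM reading the cards). The point is the separation: the supervisor only ever reads card tags, while each agent's MCP tool list stays private to that agent.

```python
import string

# A2A level: the only thing the supervisor sees is each agent's card tags.
AGENT_CARDS = {
    "RF Analysis Agent": {"wifi", "wi-fi", "roaming", "rf", "interference"},
    "User Authentication Agent": {"auth", "radius", "802.1x", "login"},
    "Network Performance Agent": {"latency", "throughput", "performance"},
}

# MCP level: tools each agent discovers from its own MCP servers (hidden from the supervisor).
_MCP_TOOLS = {
    "RF Analysis Agent": ["get_client_roaming_events", "get_channel_utilization"],
    "User Authentication Agent": ["get_auth_failures"],
    "Network Performance Agent": ["get_interface_stats"],
}


def _words(text):
    """Lowercase the request and strip surrounding punctuation from each word."""
    return {w.strip(string.punctuation) for w in text.lower().split()}


def route(request):
    """Supervisor step: choose the agent whose card tags best overlap the request."""
    words = _words(request)
    scores = {name: len(tags & words) for name, tags in AGENT_CARDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


def delegate(request):
    """Route via cards, then let the chosen agent work with its own MCP tools."""
    agent = route(request)
    if agent is None:
        return None, []
    return agent, _MCP_TOOLS[agent]


if __name__ == "__main__":
    print(delegate("Analyze Wi-Fi roaming issues and recommend mitigation strategies."))
```

Adding a hundred tools to the RF agent would not change what the supervisor has to read; adding a new agent adds only one card.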

The key point is that A2A can, and often should, be used in concert with MCP. This is not an MCP-versus-A2A decision; it is an architectural one, in which both protocols can be used as the system grows and evolves.

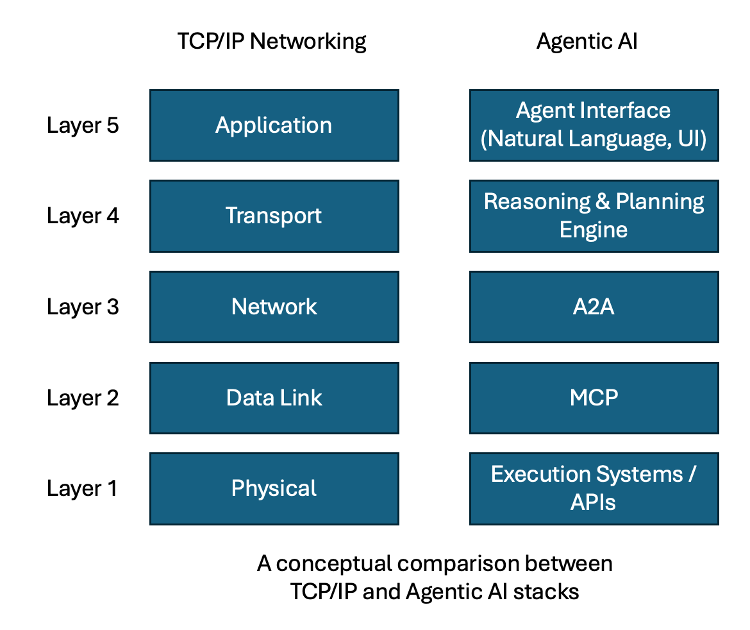

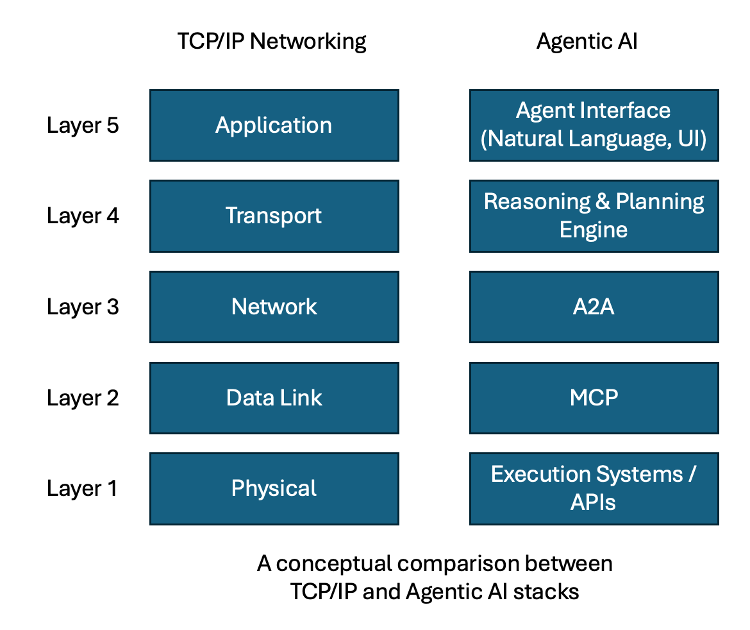

A mental model I like to use comes from the world of networking. In the early days of computer networking, networks were small and self-contained, and a single Layer 2 (data link layer) domain was sufficient. As networks grew and became more interconnected, the limits of Layer 2 were quickly reached, necessitating the introduction of routers and routing protocols, known as Layer 3 (the network layer). Routers act as boundaries between Layer 2 networks, allowing them to interconnect while preventing broadcast traffic from overwhelming the entire system. Routers describe networks in high-level, summarized terms rather than exposing every underlying detail. For a computer to communicate outside its immediate Layer 2 network, it must first find its nearest router (its default gateway), knowing that its intended destination exists somewhere beyond that boundary.
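The two router behaviors in the analogy, summarizing networks and sending off-link traffic to a gateway, can be illustrated with Python's standard `ipaddress` module. The subnets and addresses are hypothetical, and the `next_hop` helper is a simplified illustration of a host's forwarding decision, not a full routing implementation.

```python
import ipaddress

# Four hypothetical /24 subnets behind one router...
subnets = [ipaddress.ip_network(f"10.1.{i}.0/24") for i in range(4)]

# ...advertised to the rest of the network as a single summary route.
summary = list(ipaddress.collapse_addresses(subnets))


def next_hop(host_net, dest, gateway):
    """Simplified host decision: deliver on-link, otherwise hand off to the gateway."""
    if ipaddress.ip_address(dest) in host_net:
        return "deliver locally"
    return f"send to gateway {gateway}"


if __name__ == "__main__":
    print(summary)  # [IPv4Network('10.1.0.0/22')]
    lan = ipaddress.ip_network("10.1.2.0/24")
    print(next_hop(lan, "10.1.2.55", "10.1.2.1"))   # deliver locally
    print(next_hop(lan, "192.0.2.10", "10.1.2.1"))  # send to gateway 10.1.2.1
```

In the analogy, the summary route plays the role of an agent card, and the gateway plays the role of the A2A boundary.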

The relationship between MCP and A2A is closely analogous. MCP is like a Layer 2 network: it provides detailed visibility and direct access, but it does not scale indefinitely. A2A is like a Layer 3 routing boundary that aggregates higher-level capability information and provides a gateway to the rest of the agent network.

The comparison may not be perfect, but it offers an intuitive mental model that resonates with anyone who has a networking background. Just as modern networks are built on both Layer 2 and Layer 3, agentic AI systems will eventually require a full stack. In this light, MCP and A2A should not be considered competing standards. Over time, both will likely become critical layers of a larger agent stack as we build increasingly sophisticated AI systems.

The teams that recognize this early will be the ones that successfully scale their agent systems into sustainable production architectures.