This blog was written in collaboration with Fan Bu, Jason Mackay, Borya Sobolev, Dev Khanolkar, Ali Dabir, Puneet Kamal, Li Zhang and Lei Jin.

“Everything is a file”; some are databases

Introduction

Machine data, including logs, metrics, telemetry traces, configuration snapshots, and API responses, is the foundation of observability and diagnostics in modern computing systems. In practice, this data is embedded in prompts, producing an interleaved composition of natural language instructions and large machine-generated payloads, typically represented as JSON objects or Python/AST literals. While large language models excel at text and code reasoning, they often struggle with machine-generated sequences, especially when they are long, deeply nested, and dominated by repetitive structure.

We repeatedly observe three modes of failure:

- Token explosion from verbosity: Nested keys and repeated schema dominate the context window and fragment the data.

- Context rot: The model misses the “needle” hidden inside the large payload and drifts away from the instruction.

- Weak numerical/categorical sequence reasoning: Long sequences hide patterns such as anomalies, trends, and entity relationships.

The bottleneck is not just input length. Machine data needs structural transformation and signal enhancement so that the same information is presented in representations that match the strengths of the model.

“Everything is a file”; some are databases

Anthropic has successfully popularized the idea that “bash is all you need” for agent workflows, especially for vibe coding, by making full use of Bash’s file system and composable tools. In machine-data-intensive context engineering, we argue that the principles of database management also apply: rather than forcing the model to process raw blobs directly, the full payloads can be stored in a data store, which the agent queries with a small subset of simple SQL statements to generate optimized hybrid data views aligned with the strengths of the LLM.
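To make the data-store idea concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The payload, the `metrics` table, and its column names are made up for illustration; the post does not say which data store or schema ACE actually uses.

```python
import json
import sqlite3

# Hypothetical payload: interface error counters as an API might return them.
payload = json.loads("""
[
  {"device": "sw1", "interface": "Gi0/1", "ts": 1, "errors": 0},
  {"device": "sw1", "interface": "Gi0/1", "ts": 2, "errors": 0},
  {"device": "sw1", "interface": "Gi0/2", "ts": 1, "errors": 3},
  {"device": "sw1", "interface": "Gi0/2", "ts": 2, "errors": 57}
]
""")

# Keep the full payload outside the prompt, in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE metrics (device TEXT, interface TEXT, ts INTEGER, errors INTEGER)"
)
conn.executemany(
    "INSERT INTO metrics VALUES (:device, :interface, :ts, :errors)", payload
)

# A simple SQL statement produces a compact view for the model
# instead of the raw blob.
rows = conn.execute(
    "SELECT interface, MAX(errors) AS max_errors, COUNT(*) AS samples "
    "FROM metrics GROUP BY interface ORDER BY max_errors DESC"
).fetchall()
print(rows)
```

Only the aggregated rows need to enter the context; the raw records remain available for follow-up queries.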

Hybrid data views for machine data – “plain SQL is what you need”

These hybrid views are inspired by the hybrid transactional/analytical processing (HTAP) database concept, where different data layouts serve different workloads. Similarly, we maintain hybrid representations of the same payload so that LLMs can more efficiently understand different parts of the data.

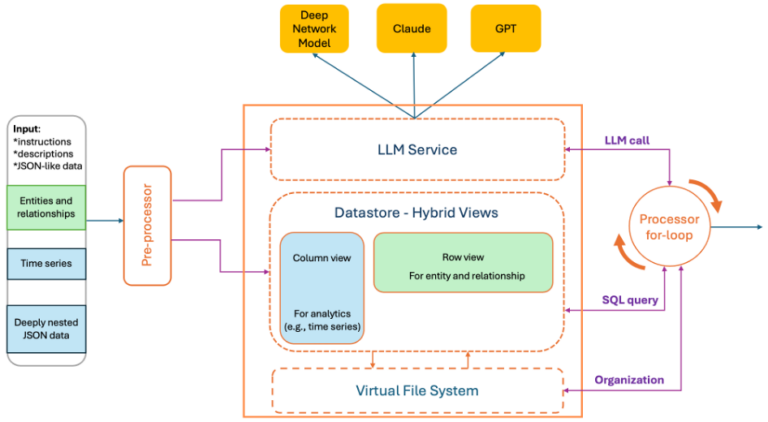

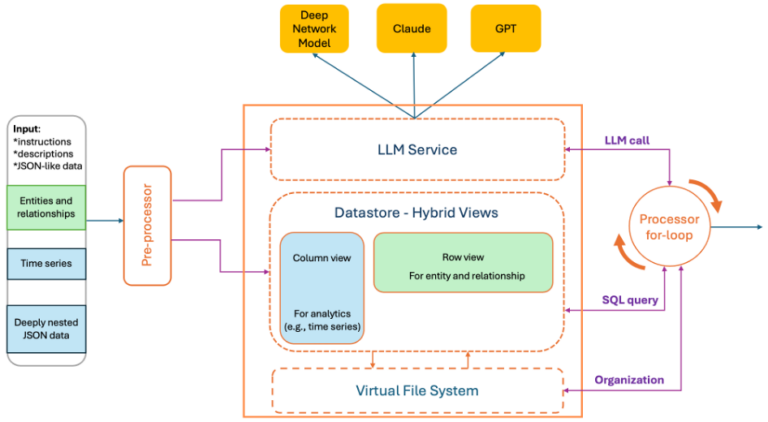

To this end, we introduce ACE (Analytics Context Engineering) for machine data, a framework for creating and managing an analytics context for LLMs. ACE combines a virtual file system (mapping observability APIs to files and transparently intercepting Bash tool calls to avoid unscalable MCP calls) with the simplicity of Bash for intuitive high-level organization, while incorporating database-style management techniques that allow precise, fine-grained control over low-level data inputs.

Deep Network Model – ACE

ACE is used in the runbook reasoning of Cisco AI Canvas. It converts raw prompts and machine payloads into instruction-preserving contexts, built from hybrid views, that LLMs can reliably consume. ACE was originally designed to enhance the Deep Network Model (DNM), Cisco’s purpose-built LLM for the networking domain. To support a wider range of LLMs, ACE was subsequently implemented as a separate service.

At a high level:

- The preprocessor parses the user’s prompt, a single string containing natural language and embedded JSON/AST blobs, and creates hybrid data views along with optional language summaries (such as statistics or anomaly traces), all within a specified token budget.

- The data store keeps a complete copy of the original machine data. This keeps the LLM context small while still allowing complete responses.

- The loop processor inspects the output of the LLM and conditionally queries the data store to enrich the response and produce a complete, structured final response.
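The first step above, separating natural language from embedded blobs in a single prompt string, can be sketched with the standard library's `json.JSONDecoder.raw_decode`. The function name `split_prompt` and the `<blob:N>` placeholder convention are assumptions for illustration, not ACE's actual interface, and this sketch handles JSON only, not Python/AST literals.

```python
import json

def split_prompt(prompt: str):
    """Separate natural-language text from embedded JSON objects/arrays.

    Returns the text with each blob replaced by a placeholder, plus the
    list of decoded blobs in order of appearance.
    """
    decoder = json.JSONDecoder()
    text_parts, blobs = [], []
    i = 0
    while i < len(prompt):
        if prompt[i] in "{[":
            try:
                # raw_decode parses one JSON value starting at index i and
                # returns the value plus the index just past it.
                value, end = decoder.raw_decode(prompt, i)
            except json.JSONDecodeError:
                pass  # not valid JSON here; treat the character as text
            else:
                text_parts.append(f"<blob:{len(blobs)}>")
                blobs.append(value)
                i = end
                continue
        text_parts.append(prompt[i])
        i += 1
    return "".join(text_parts), blobs

prompt = (
    'Which interface has errors? '
    '{"interfaces": [{"name": "Gi0/1", "errors": 0}]} Answer briefly.'
)
text, blobs = split_prompt(prompt)
print(text)
print(blobs)
```

The instruction text stays intact, while each blob can be stored separately and replaced by whatever hybrid view fits the token budget.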

Row- and column-oriented views

We generate complementary representations of the same payload:

- Column-oriented view (field-centric). For analytical tasks (e.g. line/bar charts, trends, patterns, anomaly detection), we transform nested JSON into flattened dotted paths and per-field sequences. This eliminates repeated prefixes, keeps related data contiguous, and simplifies per-field computation.

- Row-oriented view (entry-centric). To support relational reasoning, such as has-a and is-a relationships, including entity membership and association mining, we provide a row-oriented representation that preserves record boundaries and local context across arrays. Because this view does not enforce a natural ordering across rows, statistical methods can be used to order rows by relevance. Specifically, we propose a modified TF-IDF algorithm that sorts rows based on query relevance, term popularity, and diversity.
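As an illustration of the column-oriented view, the sketch below flattens nested records into dotted paths and groups the values per field. The helper names `flatten` and `column_view` and the sample records are hypothetical; the actual ACE transformation is not shown in this post and is likely more selective.

```python
def flatten(record, prefix=""):
    """Yield (dotted_path, value) pairs for one nested record."""
    if isinstance(record, dict):
        for key, value in record.items():
            yield from flatten(value, f"{prefix}.{key}" if prefix else key)
    else:
        # Scalars (and lists, which this sketch does not descend into)
        # are treated as leaf values.
        yield prefix, record

def column_view(records):
    """Turn a list of nested records into {dotted_path: [values...]}."""
    flat = [dict(flatten(r)) for r in records]
    paths = []
    for row in flat:
        for path in row:
            if path not in paths:
                paths.append(path)
    # Missing fields become None so every column stays aligned by record.
    return {path: [row.get(path) for row in flat] for path in paths}

records = [
    {"ts": 1, "iface": {"name": "Gi0/1", "stats": {"rx": 10, "tx": 7}}},
    {"ts": 2, "iface": {"name": "Gi0/1", "stats": {"rx": 12, "tx": 9}}},
]
columns = column_view(records)
print(columns)
```

Each key path now appears once rather than once per record, and every numeric series is contiguous, which is what makes per-field operators straightforward to apply.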

Rendering format: We provide several formats for rendering content. The default format remains JSON; although it is not always the most token-efficient representation, our experience shows that it usually works best with most existing LLMs. In addition, we offer a custom rendering format inspired by the open-source TOON project and Markdown, with a few key differences. Depending on the nesting structure of the schema, the data is rendered either as compact flat lists with dotted key paths or in an indented representation. Both approaches help the model infer structural relationships more effectively.

The concept of a hybrid view is well established in database systems, especially in the distinction between row-oriented and column-oriented storage, where different data layouts are optimized for different workloads. We algorithmically build a parse tree for each JSON/AST literal blob and traverse the tree to selectively transform nodes, using an opinionated algorithm that decides whether each component is better represented in row-oriented or column-oriented form, while maintaining instruction fidelity under strict token constraints.

Design principle

- ACE follows the principle of simplicity and favors a small set of generic tools. It embeds analytics directly into the LLM’s iterative reasoning and execution loop using a limited subset of SQL along with Bash tools via a virtual file system as native data management and analysis mechanisms.

- ACE prioritizes context window optimization, maximizing the LLM’s reasoning capacity within a limited context while maintaining a complete copy of the data in an external data store for query-based access. Carefully designed operators are applied to column views, while ranking methods are applied to row-oriented views.
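To illustrate what ordering rows by relevance can look like, here is a sketch using plain textbook TF-IDF with smoothed inverse document frequency. It is not the modified algorithm described in this post, which also accounts for term popularity and diversity; the function names and sample rows are hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase and split on anything that is not a letter or digit."""
    return re.findall(r"[a-z0-9]+", text.lower())

def rank_rows(rows, query):
    """Order rows by a plain TF-IDF score against the query (highest first)."""
    # Treat each row as a small document made of its keys and values.
    docs = [
        Counter(tokenize(" ".join(f"{k} {v}" for k, v in row.items())))
        for row in rows
    ]
    n = len(docs)
    # Document frequency: in how many rows does each term occur?
    df = Counter(term for doc in docs for term in doc)
    query_terms = set(tokenize(query))
    scores = []
    for doc in docs:
        total = sum(doc.values()) or 1
        score = 0.0
        for term in query_terms:
            if term in doc:
                idf = math.log((1 + n) / (1 + df[term])) + 1  # smoothed IDF
                score += (doc[term] / total) * idf
        scores.append(score)
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    return [rows[i] for i in order]

rows = [
    {"device": "sw1", "status": "up", "note": "normal"},
    {"device": "sw2", "status": "down", "note": "bgp session down"},
    {"device": "sw3", "status": "up", "note": "normal"},
]
ranked = rank_rows(rows, "which device is down")
print([row["device"] for row in ranked])
```

Under a token budget, the rows at the top of the ranking are the ones kept in the prompt, while the rest stay in the data store.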

In production, this approach drastically reduces prompt size, cost, and inference latency while improving response quality.

Illustrative examples

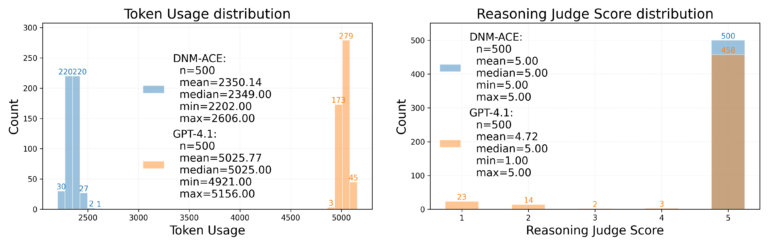

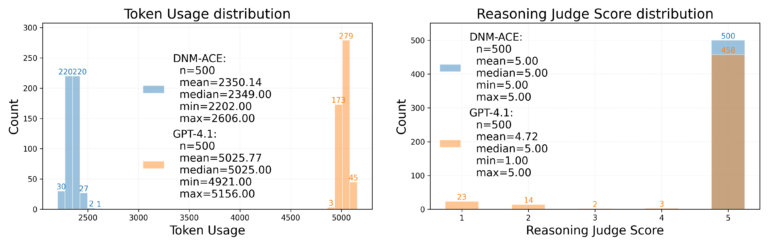

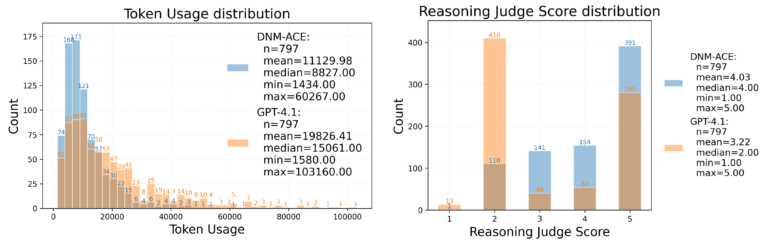

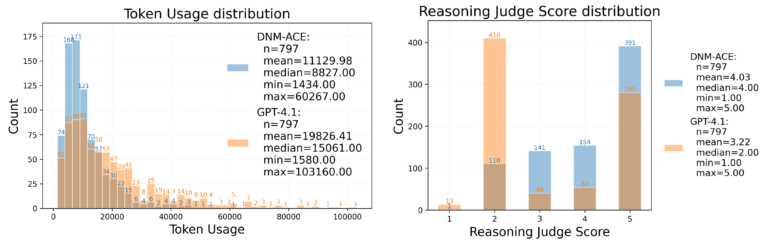

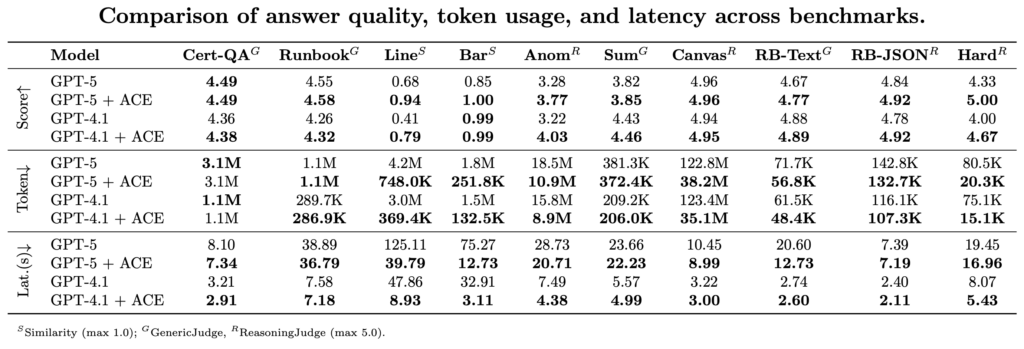

We evaluate token usage and response quality (measured by an LLM-as-a-judge score) across representative real-world workloads. Each workload contains independent tasks corresponding to individual steps in a troubleshooting workflow. Because our evaluation focuses on single-step performance, we do not include full agent diagnostic trajectories with tool calls. In addition to significantly reducing token usage, ACE also achieves higher response accuracy.

1. Slot filling:

Network runbook prompts combine JSON-encoded board state and chat instructions, previous variables, tool schemas, and user intent. The challenge is to surface a handful of fields buried in dense, repetitive machine data.

Our approach reduces the average number of tokens from 5,025 to 2,350 and corrects 42 errors (out of 500 tests) compared to calling GPT-4.1 directly.

2. Anomalous behavior:

This workload covers a wide range of machine data analysis tasks in observability workflows.

By applying anomaly detection operators to column views to provide additional contextual information, our approach raises the average response quality score from 3.22 to 4.03 (out of 5.00), a 25% increase in accuracy, while achieving a 44% reduction in token usage across 797 samples.
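The post does not specify which anomaly detection operators ACE applies. As one plausible example of an operator over a single column, the sketch below flags outliers with a modified z-score based on the median absolute deviation (MAD); the function name, the 3.5 threshold, and the sample series are assumptions.

```python
from statistics import median

def mad_anomalies(values, threshold=3.5):
    """Return (index, value) pairs whose modified z-score exceeds threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread around the median; this score is undefined
    # 0.6745 rescales MAD so the score is comparable to a standard z-score
    # for normally distributed data.
    return [
        (i, v)
        for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]

# Hypothetical column taken from a column-oriented view.
latency_ms = [12, 11, 13, 12, 250, 12, 11, 13]
anomalies = mad_anomalies(latency_ms)
summary = "; ".join(f"index {i}: value {v}" for i, v in anomalies)
print(f"latency_ms anomalies -> {summary}")
```

A short summary line like this can be placed next to the column view in the prompt, so the model does not have to find the outlier in a long numeric sequence on its own.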

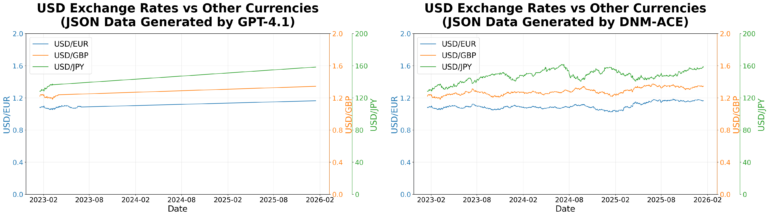

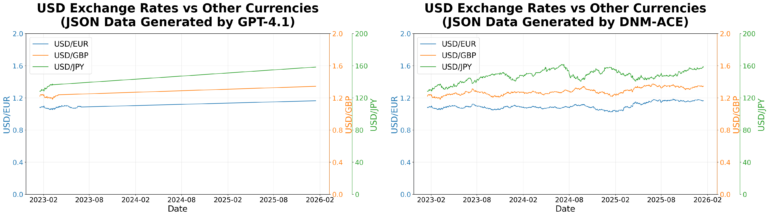

3. Line graph:

The input typically consists of time series metric data, which are arrays of measurement records collected at regular intervals. The challenge is to plot this data using frontend graphing libraries.

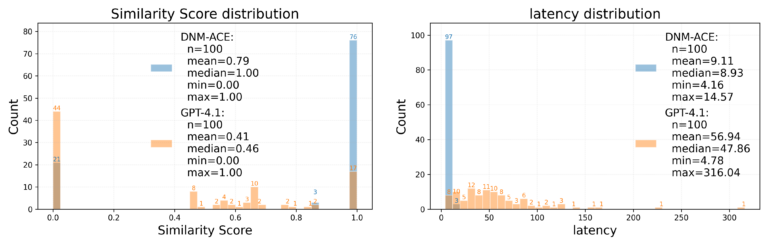

Calling the LLM directly often results in incomplete data rendering because of long output sequences, even when the input fits in the context window. In the figure above, the LLM produces a line plot with only 40-120 points per series instead of the expected 778, so data points are missing. Across 100 test samples, as shown in the following two figures, our approach achieves approximately 87% token savings, reduces the average end-to-end latency from 47.8 s to 8.9 s, and improves the response quality score (similarity_total) from 0.410 to 0.786 (out of 1.00).
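One way to avoid long output sequences is to have the model emit a compact chart specification that refers to columns by name, and to fill in the full series from the data store afterwards. The sketch below assumes a hypothetical `{"$ref": path}` placeholder convention and a dictionary standing in for the data store; the post does not describe ACE's actual mechanism at this level of detail.

```python
def expand_refs(node, store):
    """Recursively replace {"$ref": path} placeholders with stored columns."""
    if isinstance(node, dict):
        if set(node) == {"$ref"}:
            return store[node["$ref"]]
        return {key: expand_refs(value, store) for key, value in node.items()}
    if isinstance(node, list):
        return [expand_refs(item, store) for item in node]
    return node

# Stand-in for the data store: full columns with 778 points each
# (synthetic values, for illustration only).
store = {
    "ts": list(range(778)),
    "cpu.util": [50 + (i % 7) for i in range(778)],
}

# What a model might return: a short spec with references, not the data.
llm_output = {
    "type": "line",
    "title": "CPU utilization",
    "series": [{"name": "cpu", "x": {"$ref": "ts"}, "y": {"$ref": "cpu.util"}}],
}

chart = expand_refs(llm_output, store)
print(len(chart["series"][0]["y"]))
```

Because the model only writes the short specification, output length no longer grows with the number of data points, which is consistent with the latency and completeness gains reported above.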

4. Benchmark Summary:

In addition to the three examples above, we compare key performance metrics across a range of network-related tasks in the following table.

Remarks: Extensive testing across a wide variety of benchmarks shows that ACE reduces token usage by 20-90% depending on the task, while maintaining and in many cases improving response accuracy. In practice, it effectively provides an “unlimited” context window for machine data prompts.

The above evaluation covers only individual steps within the agent workflow. Design principles based on a virtual file system and database management also enable ACE to interact with the LLM reasoning process, extracting meaningful signals from vast amounts of observability data through multi-turn interactions.